A customer calls their bank's support line. The voice on the other end sounds nearly human, but something's slightly off. The timing of pauses. The flatness in a phrase that should carry warmth. Within ten seconds, the customer is no longer focused on their account question. They're analyzing the voice, testing it with unexpected questions, planning how to get a real person on the line.

The bank's AI agent answers correctly. The customer escalates anyway.

This isn't a quality problem. It's a trust problem, and they're not the same thing.

Why AI Voice Trust Is Broken (And It's Not About the Voice)

The short answer: most AI voice deployments are designed to conceal rather than communicate, and customers notice. The trust deficit in voice AI isn't primarily caused by poor synthesis quality or limited vocabulary. It's caused by a fundamental mismatch between what the system knows about itself and what it shares with the user.

When customers feel like something's being hidden (the AI's identity, its data practices, its limitations), they go into defensive mode. Trust collapses not at the moment of a mistake, but at the moment of suspicion. And suspicion is triggered by omission as much as by commission.

Think about what we've all been trained to expect from an unknown voice: banks have spent decades training customers to watch out for fraudulent calls. "Verify before you share anything" is now instinct. An AI agent that doesn't immediately, clearly establish what it is runs directly into that trained skepticism.

The answer isn't better voices. It's better honesty.

The Psychology Behind the Resistance

Users distrust AI voices because their brains are pattern-matching against "legitimate human" and flagging anomalies as threats. Understanding the specific mechanisms helps design around them.

The Uncanny Valley in Sound

The uncanny valley, originally described for visual representations of near-human faces, applies just as strongly to voice. When a synthetic voice lands in the zone that's almost-but-not-quite human, it triggers discomfort that's stronger than what users feel toward an obviously artificial voice.

Why? Because the brain interprets a near-human voice that's slightly wrong as potentially deceptive. "Something is pretending to be human." That's a much more alarming signal than "this is clearly a machine."

The practical implication is counterintuitive: an AI agent that leans into its AI identity (consistent tone, clear cadence, no attempt at emotional mimicry it can't sustain) often generates more comfort than one engineered to sound maximally human. The uncanny valley is a zone to avoid, not a target to approach.

Cognitive Load Diversion

When users suspect they're being deceived or that something's being hidden, they stop working on their task and start working on the uncertainty. They analyze intonation. They ask trick questions. They try to catch the system in an inconsistency.

This is a total lose-lose: the user doesn't accomplish what they called about, and the AI agent's metrics suffer even if it performed correctly. Escalation rates climb. First-call resolution drops. And none of it reflects the agent's actual capability.

The solution isn't better misdirection. It's removing the source of the suspicion.

The Loss-of-Control Response

Many customers feel their agency diminish when interacting with voice AI. They're not sure whether they can interrupt. They don't know if there's a human option. They worry about what's being recorded and how long it's kept.

These aren't irrational concerns. They're reasonable questions that most voice AI deployments fail to answer proactively. And when those questions go unanswered, users compensate by guarding what they share, which makes the AI less useful, which makes the interaction worse.

What Transparency Actually Looks Like

Transparency isn't a disclaimer you read at the start of the call. It's a design posture that runs through every interaction moment. Here's what it means in practice.

Immediate, Clear Identity Disclosure

The AI should identify itself as an AI in the first sentence. Not buried in option 3, not after the user asks. At the start, clearly.

"Hi, I'm an AI assistant for Meridian Bank. I can help with account questions, recent transactions, and password resets. What can I help you with today?"

This does three things at once: establishes AI identity, declares scope (the agent's capabilities), and moves immediately to the user's purpose. Users who receive this introduction are less likely to escalate, more likely to stay engaged, and more likely to trust responses, because they know what they're dealing with.

Contrast this with the common failure mode: an introduction that sounds human, gives no identity information, and asks an open-ended question. That's a guarantee of suspicion.
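One way to keep that opening consistent is to assemble it from configuration rather than hand-write it per flow. The following is a minimal sketch, not a reference implementation: `AgentProfile`, `build_greeting`, and `discloses_identity_first` are hypothetical names, and the check is a crude keyword test you might use in a pre-launch review.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentProfile:
    # Illustrative fields; substitute your own organization and scope.
    organization: str
    capabilities: tuple[str, ...]


def join_naturally(items: tuple[str, ...]) -> str:
    """Join items as spoken English: 'a', 'a and b', or 'a, b, and c'."""
    if len(items) <= 1:
        return "".join(items)
    if len(items) == 2:
        return f"{items[0]} and {items[1]}"
    return ", ".join(items[:-1]) + f", and {items[-1]}"


def build_greeting(profile: AgentProfile) -> str:
    """Compose the opening: AI identity first, then scope, then the user's purpose."""
    if not profile.capabilities:
        raise ValueError("declare at least one capability so the scope is explicit")
    identity = f"Hi, I'm an AI assistant for {profile.organization}."
    scope = f"I can help with {join_naturally(profile.capabilities)}."
    prompt = "What can I help you with today?"
    return " ".join([identity, scope, prompt])


def discloses_identity_first(greeting: str) -> bool:
    """Crude check: does the first sentence contain the standalone word 'AI'?"""
    first_sentence = greeting.split(".")[0].lower()
    return re.search(r"\bai\b", first_sentence) is not None
```

Calling `build_greeting(AgentProfile("Meridian Bank", ("account questions", "recent transactions", "password resets")))` reproduces the introduction quoted above, and `discloses_identity_first` can run against every scripted opening to catch intros that sound human but never say what they are.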

Process Narration

When the AI is doing something (looking up an account, processing a request, checking availability), it should say so. Not as filler, but as genuine transparency about what's happening.

"Let me pull up your last three transactions. One moment."

"I found something unusual in your account history. I'm going to flag this for our fraud team and can give you a reference number."

"I'm not sure I understood that. Let me try a different approach."

This narration does something powerful: it makes the AI's reasoning legible. Users who can follow what the system is doing feel in control, even when the system is doing the work. That sense of legibility is trust.

Proactive Limitation Disclosure

This is the one most teams get wrong. The temptation is to wait until the user hits a wall before explaining the agent can't help. But by then, trust is damaged. The user wasted time, hit a dead end, and had to start over.

Better: surface limitations before they become problems.

"I can help with most billing questions, but for disputes over $500 I'll need to transfer you to a specialist who can authorize changes directly."

Users forgive limitations they were told about. They do not forgive surprises. The disclosure doesn't make the AI look weak. It makes the AI look honest, which is more valuable.
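In code, proactive disclosure can be as simple as checking known limits as soon as the request is understood, before the agent starts working on it. This is a sketch under stated assumptions: the topic label `billing_dispute`, the function name, and the idea that the amount is already known are all hypothetical; the $500 threshold comes from the example above.

```python
from typing import Optional

# Threshold taken from the example above; adjust to your own policy.
DISPUTE_LIMIT_USD = 500


def limitation_notice(topic: str, amount_usd: Optional[float] = None) -> Optional[str]:
    """Return a disclosure to speak up front, or None if the request is in scope."""
    over_limit = amount_usd is not None and amount_usd > DISPUTE_LIMIT_USD
    if topic == "billing_dispute" and over_limit:
        return (
            "I can help with most billing questions, but for disputes over "
            f"${DISPUTE_LIMIT_USD} I'll need to transfer you to a specialist "
            "who can authorize changes directly."
        )
    return None
```

The point of the design is ordering: the notice is produced at intent detection, so the user hears about the limit before investing time in a path the agent cannot finish.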

Data Transparency as a Trust Lever

Many customers are genuinely uncertain what happens to their voice data. Is this call recorded? For how long? Who hears it? When the AI doesn't address this, users fill the gap with the worst-case assumption.

A simple disclosure, offered once, changes the dynamic: "This call may be recorded for quality and training purposes. You can request deletion of your interaction data by visiting our privacy page."

It sounds mundane. But for customers who've been burned by opaque data practices elsewhere, it's a meaningful signal that this organization is operating in good faith.

UX Principles That Build Trust Across Interactions

Beyond what the AI says, how the interaction is designed matters enormously. Trust is a product of accumulated small experiences, each one either adding to or subtracting from a balance.

Consistency Creates Predictability, and Predictability Creates Comfort

An AI agent that behaves consistently (same voice, same intro, same patterns for handling misunderstandings) becomes predictable. Predictable systems feel trustworthy because users know what to expect.

The failure mode here is inconsistency: different behavior in different contexts, surprising response patterns, sudden tonal shifts. Even if each individual response is fine, the unpredictability erodes confidence.

This is one reason it's worth testing your AI agent across diverse scenarios before shipping. You need to know whether the agent behaves consistently when topics shift, emotions run high, or questions go outside its training.

Graceful Error Recovery

Every AI agent makes mistakes. Trust isn't built by being error-free. It's built by handling errors well.

The anatomy of a trust-building error recovery:

- Immediate acknowledgment: "I didn't quite catch that."

- No blame assignment: don't imply the user spoke unclearly

- Constructive next step: offer a different approach or a transfer option

- No repetitive loops: if the third attempt fails, proactively offer escalation

What destroys trust is the opposite: repeated failures with no acknowledgment, apologetic loops that lead nowhere, or, worst of all, confident wrong answers. Saying "I don't know, but here's how we can find out" is far better than confidently providing incorrect information.
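The recovery pattern above can be sketched as a small state machine that counts failed attempts and offers a human on the third. Everything here is illustrative: `RecoveryState`, `recover`, and the exact wording are assumptions, not a prescribed script.

```python
from dataclasses import dataclass

# "If the third attempt fails, proactively offer escalation."
MAX_ATTEMPTS = 3


@dataclass
class RecoveryState:
    failed_attempts: int = 0


def recover(state: RecoveryState) -> tuple[str, bool]:
    """Record one failed understanding; return (what to say, offer a human?)."""
    state.failed_attempts += 1
    if state.failed_attempts >= MAX_ATTEMPTS:
        # Stop looping: acknowledge, then offer a way out that keeps context.
        return (
            "I'm still not getting this right. Would you like me to connect "
            "you with a person? I'll pass along what we've covered.",
            True,
        )
    if state.failed_attempts == 1:
        # Acknowledge immediately, without implying the user was unclear.
        return ("I didn't quite catch that. Could you tell me what you need?", False)
    # Second failure: change approach instead of repeating the same prompt.
    return (
        "I'm not sure I understood that. Let me try a different approach: "
        "is this about a payment, a transfer, or something else?",
        False,
    )
```

Keeping the counter per conversation, rather than per prompt, is what prevents the apologetic loop: the agent cannot fail a fourth time without having offered escalation.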

The Escalation Path as a Trust Signal

How easy it is to reach a human sends a powerful signal about how much the organization trusts its own AI agent.

If escalation is buried in option menus, requires repeating "human agent" three times, or results in a long hold, customers conclude the AI is being used to avoid accountability. If escalation is immediate and graceful ("Connecting you now, and I'll pass along what we've discussed so you don't have to repeat it"), customers conclude the organization is using AI to improve service, not to cut corners.

The handoff moment is a trust moment. Invest in it.

Emotional Calibration, Not Emotional Performance

Voice AI can recognize emotional signals in customer speech (frustration, urgency, confusion) and should respond accordingly. But there's a distinction between calibrating tone and performing emotion.

Calibrating: slowing down, simplifying language, expressing genuine acknowledgment ("I understand this is frustrating, let me see what I can do") when a customer is clearly upset.

Performing: scripted empathy phrases delivered at the wrong moment, hollow apologies that don't lead to anything, sympathy that's obviously mechanical.

The first builds trust. The second undermines it because customers can feel the mismatch between the words and the experience.

The Human-AI Handoff as a Trust Architecture Choice

Warm handoffs, where the AI summarizes context and introduces the human agent, dramatically outperform cold transfers on every trust metric. This isn't just a nice-to-have; it's a design choice that signals organizational coherence.

When a customer is transferred and the human agent says "I can see you were asking about your account balance and noticed an unusual transaction, let me take a look at that for you," the customer's experience of the entire system improves. Both the AI and the human look more competent. The organization looks like it has its act together.

When the customer is transferred and has to start over, both the AI and the human look bad. The customer's perception of the whole interaction, including the parts the AI handled correctly, is dragged down.

Context preservation during handoff is a structural trust investment, not a feature. Teams building voice AI should design for this from the start, not retrofit it later.
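Designing for context preservation usually means deciding early what the AI agent records and what the human agent sees at pickup. The structure below is a hypothetical sketch; the field names and briefing format are assumptions, not a standard.

```python
from dataclasses import dataclass, field


@dataclass
class HandoffContext:
    # What the customer called about, in plain language.
    customer_goal: str
    # Things the AI agent noticed that the human should know.
    findings: list[str] = field(default_factory=list)
    # Steps the customer should not have to repeat.
    steps_completed: list[str] = field(default_factory=list)

    def agent_briefing(self) -> str:
        """Plain-text summary shown to the human agent before they pick up."""
        lines = [f"Customer goal: {self.customer_goal}"]
        lines += [f"Finding: {item}" for item in self.findings]
        lines += [f"Already done: {item}" for item in self.steps_completed]
        return "\n".join(lines)


def handoff_announcement() -> str:
    """What the AI agent says to the customer at the moment of transfer."""
    return (
        "Connecting you now, and I'll pass along what we've discussed "
        "so you don't have to repeat it."
    )
```

With a briefing like this in front of them, the human agent can open with the customer's actual issue instead of asking them to start over.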

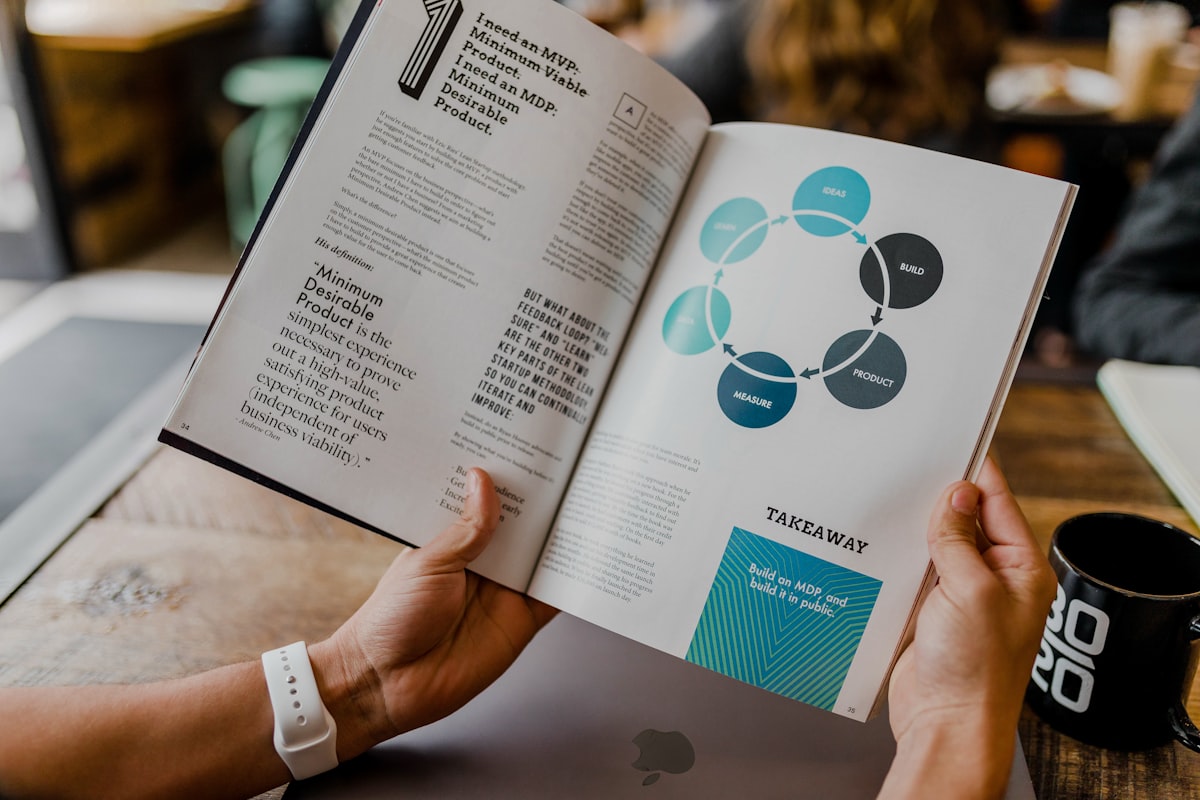

Measuring Whether You Have a Trust Problem

The clearest signal of a trust problem is a gap between accuracy and escalation. If your AI agent answers correctly most of the time but users still request humans at high rates, trust is the issue, not capability.

Other signals to watch:

Escalation rate by topic: Some escalation is appropriate, since complex issues genuinely need humans. But if routine topics (balance inquiries, status checks) are escalating at high rates, users aren't trusting the AI to handle things it clearly can.
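A rough way to spot that pattern is to compute accuracy and escalation rate per topic and flag topics where the first is high and the second is too. This is a sketch: the `Call` record, the function name, and both default thresholds are assumptions to tune against your own baseline.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Call:
    topic: str
    answered_correctly: bool
    escalated: bool


def trust_gap_topics(
    calls: list[Call],
    min_accuracy: float = 0.9,     # assumed cutoff for "the agent can handle this"
    max_escalation: float = 0.2,   # assumed cutoff for "too many users ask for a human"
) -> dict[str, tuple[float, float]]:
    """Return {topic: (accuracy, escalation_rate)} for topics that look like trust problems."""
    totals = defaultdict(lambda: [0, 0, 0])  # [calls, correct, escalated]
    for call in calls:
        counts = totals[call.topic]
        counts[0] += 1
        counts[1] += int(call.answered_correctly)
        counts[2] += int(call.escalated)

    flagged = {}
    for topic, (total, correct, escalated) in totals.items():
        accuracy = correct / total
        escalation = escalated / total
        # High accuracy with high escalation points at trust, not capability.
        if accuracy >= min_accuracy and escalation > max_escalation:
            flagged[topic] = (accuracy, escalation)
    return flagged
```

Topics with low accuracy are deliberately not flagged here: escalation on those is a capability problem and needs a different fix.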

Mid-conversation abandonment: Users hanging up mid-interaction, especially before the AI has had a chance to answer, suggests the interaction style itself is creating friction.

Task completion vs. sentiment: You can track whether tasks complete successfully and how users rate the experience. When completion is high but satisfaction is low, users completed their task despite the experience, not because of it. That gap usually narrows as trust improves.

Scorecard-based evaluations that assess tone, disclosure quality, and handling of unexpected questions can surface trust failure patterns that raw completion metrics miss entirely.
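A scorecard of this kind can be sketched as a weighted rubric over reviewed calls. The criteria mirror the three named above, but the weights, the 0-to-1 rating scale, and the function names are assumptions for illustration only.

```python
# Hypothetical weights; they should sum to 1.0 so scores stay on a 0-to-1 scale.
CRITERIA_WEIGHTS = {
    "tone": 0.3,
    "disclosure": 0.4,
    "unexpected_questions": 0.3,
}


def score_call(ratings: dict[str, float]) -> float:
    """Weighted score for one call; each rating is a reviewer's 0-to-1 judgment."""
    missing = CRITERIA_WEIGHTS.keys() - ratings.keys()
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    return sum(weight * ratings[name] for name, weight in CRITERIA_WEIGHTS.items())


def weakest_criterion(calls: list[dict[str, float]]) -> str:
    """Return the criterion with the lowest average rating across reviewed calls."""
    if not calls:
        raise ValueError("need at least one rated call")
    averages = {
        name: sum(call[name] for call in calls) / len(calls)
        for name in CRITERIA_WEIGHTS
    }
    return min(averages, key=averages.get)
```

The per-criterion average is often more useful than the overall score, because it points at which behavior to fix (for example, disclosure) rather than just reporting that something is off.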

The most rigorous approach is testing with simulated personas before going live, particularly skeptical or anxious customer profiles, so trust problems surface in testing rather than in production. Platforms like Chanl let you run these scenario-based evaluations against your agent systematically.

What Changes When You Get This Right

Teams that invest in voice AI trust (not voice quality, but transparency and UX) see consistent downstream effects:

Escalation rates drop, not because customers can't escalate but because they stop feeling like they need to. They get what they called about.

Task completion climbs. Users who trust the system share more accurate information and follow through on the process rather than abandoning it.

Agent reputation improves over time. When customers have consistently trustworthy experiences, the anxiety of future calls drops. They arrive already expecting it to go well.

And that last one is the compounding return: trust earned today lowers the cost of every future interaction. Suspicion earned today raises it.

The Short Version

Customers don't distrust AI voices because the technology is bad. They distrust them because most deployments are designed to obscure things customers have a legitimate right to know: what they're talking to, what it can do, what happens to their data, and when a human is available.

Fix the concealment and the trust deficit largely fixes itself. Disclose identity immediately. Narrate the process. Surface limitations before they become failures. Make escalation easy and context-preserving.

The goal isn't a voice that sounds more human. It's an agent that behaves more honestly. Those are completely different design objectives, and only one of them actually earns trust.

